The 2017 Global Consumer Pulse report by Accenture states that 61% of customers around the world and 78% in emerging markets such as India switch companies due to bad customer experience.

Since one of the primary objectives of deploying an Intelligent Virtual Assistant (IVA) solution is to enhance customer experience (CX) for enterprises, it is crucial to ensure that IVAs are equipped with the capabilities to deliver on that goal.

AI approaches today understand human language and handle contextual responses in spectacularly different ways. Although we are constantly improving, AI assistants do commit errors. Natural language predictions can go wrong, but how we manage these scenarios is crucial to ensuring good CX.

As Conversational AI developers, we should not be leading customers to failing conversations. Instead, a good IVA should be able to understand user intent, ensure that the conversation stays on track, and ensure that the customer has a positive experience with the brand.

For Intelligent Virtual Assistants built on the Haptik platform, we work backwards to cover scenarios where a customer might get stuck or receive an irrelevant response. Enterprises often cannot predict customer expectations. This might hamper their CX over time, as end-users expect an effortless and smooth experience while interacting with a brand’s AI assistants.

As part of our continuous effort to enhance the customer experience delivered by our IVAs, we recently added a new feature to our Natural Language Understanding (NLU) engine – Disambiguation.

What is Disambiguation?

Disambiguation means removing ambiguity by making something clear. When you find someone's response ambiguous in a conversation, you prompt them with a follow-up question. We applied the same first principle of human conversation and built it into the Haptik NLU engine.

Intent Mismatch Scenarios Solved with Disambiguation

Your customer conversation data will often contain partial or incomplete messages sent to the Intelligent Virtual Assistant, and the NLU (Natural Language Understanding) engine has to infer the full context from them. This issue is even more common for conversational topics that your end customers find confusing. Let us take a look at a few examples:

While interacting with an e-commerce IVA, a customer types “Price”. Now, how would the IVA know whether the user wants the price of product A or product B?

Another example is a telecom company's IVA, which could go two ways when a customer says “Recharge”. The two intents that match closely are:

1. Recharge failed

2. My Recharge

To resolve ambiguity in responses and enable our Conversational AI platform to respond accurately, we embedded Disambiguation logic in our NLU core. The ability to disambiguate poorly constructed user messages can make IVAs work 10X better than traditional chatbot platforms.

But how did we arrive at this problem?

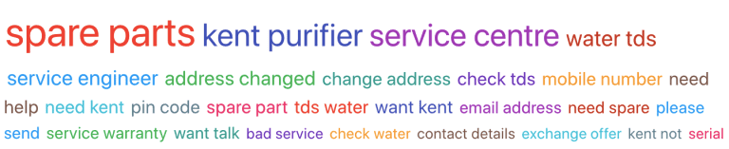

We observed a trend of frustrating, head-scratching conversations and gathered insights from our AI Analytics system.

Disambiguation is a way to guide customers to the correct intent even when they send an ambiguous message. Let’s take the example of a Mutual Fund awareness IVA, which answers questions about mutual fund concepts. Say the customer sends the single-word message “SIP”. Multiple intents can be tied to that single word. Instead of choosing only the top-scoring response, we could suggest the intents most relevant to the user. For example, there could be three options for the customer:

1. What is SIP?

2. Benefits of SIP

3. Start a SIP
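
The idea of offering the closest intents instead of only the top one can be sketched as follows. This is a minimal illustration in Python, not Haptik's implementation: the function names, the confidence scores, the wording of the prompt, and the extra "Redeem mutual fund" intent are all assumptions made up for the example.

```python
from typing import Dict, List


def top_suggestions(intent_scores: Dict[str, float], k: int = 3) -> List[str]:
    """Return the k highest-scoring intent labels, best first."""
    ranked = sorted(intent_scores.items(), key=lambda item: item[1], reverse=True)
    return [label for label, _ in ranked[:k]]


def build_prompt(suggestions: List[str]) -> str:
    """Format the suggestions as a numbered follow-up question."""
    lines = ["Did you mean one of these?"]
    lines += [f"{i}. {label}" for i, label in enumerate(suggestions, start=1)]
    return "\n".join(lines)


# Hypothetical scores for the message "SIP"; real values would come from the NLU model.
sip_scores = {
    "What is SIP?": 0.41,
    "Benefits of SIP": 0.33,
    "Start a SIP": 0.22,
    "Redeem mutual fund": 0.04,
}

print(build_prompt(top_suggestions(sip_scores)))
```

With these made-up scores, the prompt lists the same three options as above and leaves out the weakly matching fourth intent. Limiting the list to a small `k` keeps the follow-up question short enough to answer in one tap.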

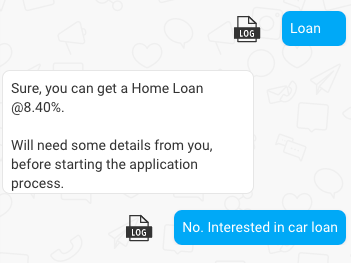

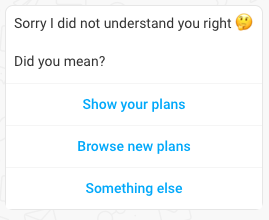

Most IVAs send a single response based on the intent matched with the highest confidence score. However, we should ideally guide the customer by sending the most probable matches. Let’s take an example where the user sends “plans” as a single-word message. An IVA with disambiguation capability can respond as shown in the screenshot.

As you can see above, instead of leading the customer to a wrong path, we provide them with intelligent suggestions. Now, the customer could either check their own plans or browse new plans.

Disambiguation Keeps Conversations on Track

A common reason for failing conversations is the wrong classification of messages. One cause might be a lack of training data, which can be fixed by adding more.

Intent match errors, such as the ones we discussed earlier, are harder to handle. This is where our Disambiguation feature helps. We check whether the Haptik NLU engine, which is responsible for understanding messages, was able to classify the message confidently. If the classification confidence does not meet certain thresholds, we trigger a disambiguation message.
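
A rough sketch of such a confidence check is shown here. The threshold values and names are hypothetical, and the second rule (a small gap between the top two intents) is an assumption added for illustration; the actual thresholds used by the Haptik NLU engine are not described in this article.

```python
from typing import List, Tuple

# Hypothetical thresholds, chosen only for this example.
MIN_CONFIDENCE = 0.70  # below this, the top intent is not trusted on its own
MIN_MARGIN = 0.15      # assumed rule: top two intents closer than this are "too close"


def should_disambiguate(ranked: List[Tuple[str, float]]) -> bool:
    """Decide whether to ask a follow-up question.

    `ranked` holds (intent, confidence) pairs sorted by confidence, highest first.
    """
    if not ranked:
        return False  # nothing to suggest; the caller would use a fallback reply
    top_score = ranked[0][1]
    if top_score < MIN_CONFIDENCE:
        return True
    if len(ranked) > 1 and top_score - ranked[1][1] < MIN_MARGIN:
        return True
    return False


# "Recharge" matches two intents almost equally, so a follow-up is triggered.
ambiguous = [("Recharge failed", 0.48), ("My Recharge", 0.45)]
# A clearly worded message is answered directly.
confident = [("Recharge failed", 0.93), ("My Recharge", 0.04)]

print(should_disambiguate(ambiguous))
print(should_disambiguate(confident))
```

Keeping the decision in one small function makes the thresholds easy to tune against real conversation data without touching the rest of the response pipeline.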

We realized the need to probe against a response only in some specific scenarios. The problem statement we started with was simply to not lead the user down the wrong path, thus avoiding bad user experience. Our solution puts the onus on the Haptik Conversational AI platform to capture course correction scenarios – and then trigger Disambiguation to detect the correct user intent.

The Disambiguation feature has been highly effective for us since its implementation, with a 70% success rate. This means that Disambiguation has enabled Haptik IVAs to understand users 70% of the time in cases where the user would otherwise not have been understood at all. Overall, Disambiguation has contributed to a 16% reduction in conversation breaks caused by vague or ambiguous user inputs.

Needless to say, Disambiguation will go a long way towards ensuring that our Intelligent Virtual Assistants are well-equipped to understand the user’s intent without any ambiguity and execute the tasks required to successfully provide end-to-end query resolution.

It may sound obvious, but there’s no denying the simple fact that better customer experience leads to happy customers, and happy customers are precisely what every brand needs! Haptik has been in the business of building conversational solutions for over 6 years now, and in that time we’ve observed that enhancing CX is key to boosting ROI for any enterprise. This has shaped our own approach towards developing best-in-class IVA solutions.

This article has been penned by Nikunj Sharma, Product Manager – Platform at Haptik.

