At Haptik, we have a platform that clients use to create chatbots to interact with their customers. Although each chatbot serves a different business use case, handling sensitive PII during a conversation is a common problem across the system. Every day, users share personal and sensitive data such as phone numbers, credit card numbers, sensitive documents, addresses, and many other details on various portals. Securing this personal data is paramount to our business.

Personal data could be compromised by a breach anywhere in a system. Suppose an attacker gains access to an organization's sensitive data. They could then coerce the organization into paying a sum of money in return for access to its own data, or leak that data publicly for monetary gain. Such a breach would lead to massive reputational and financial loss for the organization.

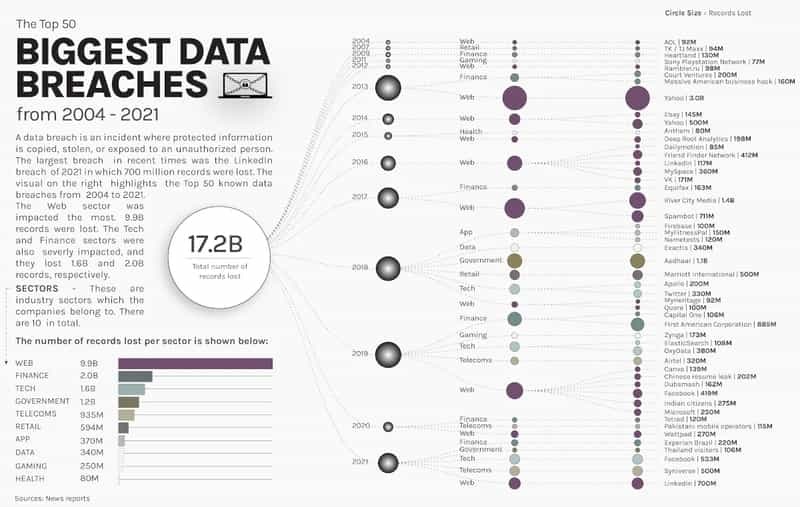

Significant data breaches have hit even large organizations in the past.

Read more: What you need to know about chatbot security

As a SaaS company, Haptik believes in developing and enforcing strict security policies and procedures including, but not limited to, access control mechanisms such as user authorization and authentication, encryption of data, and isolation of important data from the public internet. We already support Data-Encryption-At-Rest to protect the data from physical theft or unauthorized access to file storage. While we have multiple other security measures in place to protect users’ data, we decided to add an extra layer of protection by individually encrypting users’ PII data.

Choosing a data encryption strategy may sound quite simple at first, but for an enterprise SaaS platform, it poses a unique set of challenges.

- Firstly, our platform is deployed in multiple regions and serves millions of requests that store and retrieve data from the database, so the encryption and decryption strategy needs to be highly performant.

- Secondly, each user request stores multiple data points in the database, so we have accumulated billions of historical records. These records need to be encrypted as well.

- Lastly, the strategy should not increase code complexity, since encryption and decryption would happen in multiple places in the platform.

Keeping the above challenges in mind, we explored a few solutions described below before finalizing one that fits our needs.

Encryption using Database functions

MySQL provides two standard database functions, AES_ENCRYPT and AES_DECRYPT, to encrypt data when saving it and decrypt it when retrieving it. They can be invoked from many places, from raw SQL queries to stored procedures. These functions implement encryption and decryption using the official AES (Advanced Encryption Standard) algorithm. The AES standard permits various key lengths. By default, these functions implement AES with a 128-bit key length; key lengths of 192 or 256 bits can also be used. The key length is a trade-off between performance and security. The key needs to be provided as a parameter in the function invocation.

Refer to MySQL docs for more information
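As a rough illustration of what calling these functions from raw queries involves, here is a minimal sketch. The table `chat_message` and its columns are hypothetical; only `AES_ENCRYPT` and `AES_DECRYPT` are MySQL built-ins. The helpers just build parameterized SQL for a DB-API cursor and do not connect to a database.

```python
# Hypothetical table and column names, chosen only for illustration.
# AES_ENCRYPT returns a binary string, so `body` would need to be a
# VARBINARY or BLOB column.
INSERT_SQL = (
    "INSERT INTO chat_message (user_id, body) "
    "VALUES (%s, AES_ENCRYPT(%s, %s))"
)
SELECT_SQL = (
    "SELECT CAST(AES_DECRYPT(body, %s) AS CHAR) "
    "FROM chat_message WHERE user_id = %s"
)


def build_insert(user_id, body, key):
    """Return (sql, params) that stores `body` encrypted with `key`."""
    return INSERT_SQL, (user_id, body, key)


def build_select(user_id, key):
    """Return (sql, params) that reads `body` back, decrypted with `key`."""
    return SELECT_SQL, (key, user_id)
```

With a real connection, each pair would be passed to something like `cursor.execute(sql, params)`. Note that the key travels with every query, which is part of why this approach ties encryption to hand-written SQL.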

This approach works best when encryption is in place from the platform's first day, since it provides no out-of-the-box way to encrypt historical data. We would have to run a separate exercise to encrypt that data, which would mean a few days of database downtime.

Additionally, this approach is only practical if you use raw queries to store and retrieve data. Most frameworks do not support running this type of query; we use the Django ORM for our queries, and it does not support them.

Due to these reasons, we didn’t choose this approach.

Encryption using custom methods

A pair of custom methods, one for encryption and one for decryption, can be written and called wherever required. This approach gives you the flexibility to choose the algorithm.

In terms of codebase management, these methods would have to be called everywhere we store or retrieve data for the table. This increases the code's complexity and reduces its readability. It also carries a significant risk: if a developer forgets to call them in any of the required places, the data becomes inconsistent. For these reasons we didn’t choose this solution as is and instead looked for a way to make it more reliable.
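A minimal sketch of such a helper pair is shown below. The post does not name the algorithm or library we used, so Fernet from the third-party `cryptography` package is an assumption here, as are all function names. The key is generated inline only to keep the example self-contained; a real key would be loaded from a secure store.

```python
from cryptography.fernet import Fernet

# Assumption: demo key generated at import time. In practice the key
# would come from configuration or a secret manager.
_fernet = Fernet(Fernet.generate_key())


def encrypt_value(plaintext: str) -> str:
    """Encrypt a string and return the token as text."""
    return _fernet.encrypt(plaintext.encode("utf-8")).decode("ascii")


def decrypt_value(token: str) -> str:
    """Decrypt a token produced by encrypt_value."""
    return _fernet.decrypt(token.encode("utf-8")).decode("utf-8")


# Hypothetical call sites: every write and every read has to remember
# to call the matching helper. Missing one leaves mixed data behind.
def save_phone(store: dict, user_id: int, phone: str) -> None:
    store[user_id] = encrypt_value(phone)


def load_phone(store: dict, user_id: int) -> str:
    return decrypt_value(store[user_id])
```

The helpers themselves are small. The maintenance cost comes from the call sites, which multiply with every new code path that touches the table.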

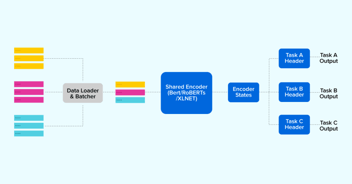

Encryption via ORM method overriding

In the above solution, calling the encrypt and decrypt methods at each required place could easily be missed, so we explored a solution where declaring them once per data point would be enough.

In the Django ORM, we created a new model field that subclasses the existing field and overrides the methods used to insert and retrieve data from a table. Every ORM query passes through these methods, which perform the encryption or decryption. Nothing changes at the database level, since the database treats the encrypted value as a normal string. On retrieval, the field first tries to decrypt the value using a secret key and returns it in readable form; if the value cannot be decrypted, it is returned as is. This lets the solution handle both unencrypted and encrypted data at retrieval time.

There are no major cons to this approach, but since the encryption and decryption would add some processing time irrespective of which approach we chose, we evaluated the performance impact of this change. Please refer to the appendix for detailed performance results.

Given that there were no major downsides, we finalized this approach.

How we deployed this change to production, and the challenges

As a platform, we have a multi-region deployment. To reduce the surface area for this change and release in a controlled fashion, we deployed it in a single region first, before rolling it out in the other regions.

Within each region, we deployed the decryption-related changes first, so that both historical data and newly encrypted data would be read correctly at retrieval time. The main reason is that each region has multiple instances handling requests, and it takes a few minutes to deploy and restart all of them. If encryption went live during that window, data inserted through an updated instance would be stored encrypted, and a user served by an instance that had not yet been updated would see the encrypted value in the chat. Deploying the decryption logic first avoids that situation.

Next, we deployed the encryption-related changes, so that from then on every new chat entry inserted into the database is stored in an encrypted format.

After successful testing in a single region, we repeated the rollout in the other regions. We encrypted older data separately, using a script that updated records in batches so that it would not affect the performance of the live system.
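The batched update of older data can be sketched in a storage-agnostic way. Everything here is hypothetical: the row shape, the function names, and the callables `encrypt`, `is_encrypted`, and `save_batch`, which stand in for the real cipher, a check for already-encrypted values, and a bulk database write.

```python
import time
from itertools import islice
from typing import Callable, Iterable, Iterator, List, Tuple

Row = Tuple[int, str]  # (primary key, column value); hypothetical shape


def batched(rows: Iterable[Row], size: int) -> Iterator[List[Row]]:
    """Yield lists of at most `size` rows."""
    iterator = iter(rows)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


def backfill(
    rows: Iterable[Row],
    encrypt: Callable[[str], str],
    is_encrypted: Callable[[str], bool],
    save_batch: Callable[[List[Row]], None],
    batch_size: int = 1000,
    pause_seconds: float = 0.0,
) -> int:
    """Encrypt legacy plaintext rows batch by batch; return rows updated."""
    updated = 0
    for batch in batched(rows, batch_size):
        # Skip rows that are already encrypted so the script can be re-run.
        pending = [(pk, encrypt(v)) for pk, v in batch if not is_encrypted(v)]
        if pending:
            save_batch(pending)
            updated += len(pending)
        time.sleep(pause_seconds)  # leave room for live traffic
    return updated
```

Small batches with a pause between them keep each write short, and skipping already-encrypted rows makes the script safe to stop and resume.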

This way we added an extra layer of security for users' PII data on our platform.

Appendix

Performance impact of Approach 3

1. Impact on data insertion after changes

2. Impact on data retrieval after changes

In conclusion, securing PII data is of utmost importance in today's digital age where data breaches and cyber threats are becoming increasingly common. Organizations must implement robust security measures, such as encryption, access controls, and regular audits, to protect their customers' sensitive information.

Furthermore, it is crucial to remain vigilant and up-to-date with the latest security practices to ensure the continued safety of PII data. By adopting a comprehensive approach to data security, organizations can build trust with their customers and safeguard their reputations in the long run.
