Your customer called at 9 PM. They sat through three IVR menus, didn't find the option they needed, and hung up.

That's a business problem, not a technology problem - one that voice AI agents are solving for 500+ enterprises right now.

In Haptik's deployments, the average FAQ call handled by a voice AI agent takes 1.8 minutes. The same call through IVR takes 4.5 minutes, so the agent cuts handling time by 60%. That difference shows up in the customer experience, the cost structure, and whether the person on the other end picks up the phone again.

This guide explains what voice AI agents actually are, how they work, where they're being deployed in India today, and what it takes to deploy one at enterprise scale.

What Is a Voice AI Agent?

A voice AI agent is software that holds a real spoken conversation with your customer - not by following a menu or a script, but by understanding what they mean, deciding what to do about it, and executing that action in real-time.

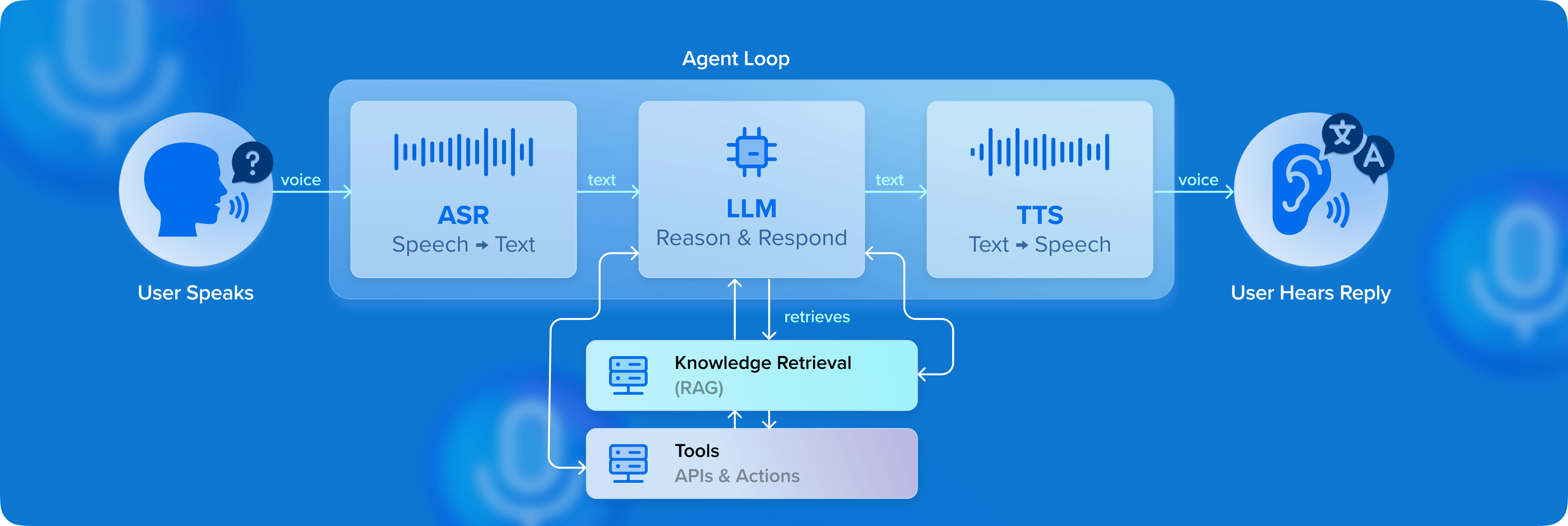

It hears what your customer says (ASR). It understands what they mean (LLM reasoning). It checks your systems (CRM, ticketing, payments). It responds in natural speech (TTS). The entire cycle happens in under 500 milliseconds.

At a functional level, an AI voice agent:

- Handles multi-turn conversations without predefined flows

- Interprets user intent even when phrased ambiguously

- Maintains context across the interaction

- Decides the best action dynamically

- Executes backend operations (API calls, workflows, transactions)

- Adapts responses based on user history and state

Thus, instead of automating responses, enterprises now automate conversations and outcomes.

What Are the Key Benefits of a Voice AI Agent?

Voice AI agents change how enterprises scale conversations, operate contact centers, and deliver customer experience.

1. Scalability

Traditional contact centers scale with headcount. Voice AI agents handle thousands of concurrent conversations without adding proportional cost, enabling enterprises to manage peak volumes and seasonal spikes without expanding teams.

2. Faster resolution

Voice AI agents don’t wait in queues or transfer between teams. They access systems instantly and move conversations forward without delays. The result is quicker resolutions and shorter call durations.

3. 24/7 availability

Customers don’t operate on business hours. Voice AI agents provide round-the-clock support without requiring night shifts or additional staffing, improving accessibility while keeping costs predictable.

4. Higher agent productivity

Voice AI doesn’t replace human agents but offloads repetitive work. By handling high-volume queries, it allows human agents to focus on complex, high-value interactions where judgment and empathy matter most.

5. Measurable and optimizable performance

Every interaction is trackable. Enterprises gain visibility into what’s working, where conversations fail, and how performance evolves - making continuous improvement a built-in capability, not an afterthought.

ALSO READ: Beyond Accuracy: The 7 Metrics That Actually Define Voice AI Performance

How Voice AI Agents Work Under the Hood

Speech recognition (ASR):

The interaction starts with real-time transcription of spoken input into structured text. Modern systems are optimized for low latency, accent and dialect variation, and noisy, real-world environments.

Reasoning layer (LLMs):

The transcribed input is processed by a reasoning layer that identifies intent, interprets context, and plans the response or action. This is the point where the system moves from recognition to understanding.

Knowledge retrieval and grounding:

The agent pulls from your CRM, knowledge base, or transaction system to answer with your data. This is what separates an enterprise voice agent from a generic AI assistant.

Orchestration and decisioning:

It decides whether to respond, ask a follow-up, or execute an action, which backend systems to trigger, and how to manage conversation state.

Speech generation (TTS):

The final response is converted into natural-sounding speech, with modern TTS systems delivering human-like tone and pacing, multilingual adaptability, and context-aware delivery.
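The five stages above can be sketched as a single turn handler. This is a minimal illustration under stated assumptions, not Haptik's implementation: `transcribe`, `reason`, `lookup_order`, and `synthesize` are hypothetical stand-ins for real ASR, LLM, backend, and TTS services, and each is stubbed with canned logic so the flow is runnable end to end.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Turn:
    """State carried through one conversational turn."""
    audio_in: bytes
    transcript: str = ""
    intent: str = ""
    slots: dict = field(default_factory=dict)
    reply_text: str = ""
    audio_out: bytes = b""


# Hypothetical stand-ins for real services (assumptions, not a vendor API).
def transcribe(audio: bytes) -> str:
    # A real ASR would stream partial transcripts; here the "audio" is UTF-8 text.
    return audio.decode("utf-8")


def reason(transcript: str) -> tuple[str, dict]:
    # A real reasoning layer would call an LLM; this is a keyword stub.
    words = transcript.lower().replace("?", "").split()
    if "order" in words:
        order_ids = [w for w in words if w.isdigit()]
        return "order_status", {"order_id": order_ids[0] if order_ids else None}
    return "unknown", {}


ORDERS = {"1042": "out for delivery"}  # stand-in for a CRM or order system


def lookup_order(order_id: str) -> Optional[str]:
    return ORDERS.get(order_id)


def synthesize(text: str) -> bytes:
    # A real TTS would return audio frames; here we return encoded text.
    return text.encode("utf-8")


def handle_turn(audio_in: bytes) -> Turn:
    turn = Turn(audio_in=audio_in)
    turn.transcript = transcribe(turn.audio_in)        # 1. speech recognition
    turn.intent, turn.slots = reason(turn.transcript)  # 2. reasoning
    # 3-4. grounding and orchestration: respond, ask a follow-up, or look up data
    if turn.intent == "order_status":
        order_id = turn.slots.get("order_id")
        if order_id is None:
            turn.reply_text = "Sure, what is your order number?"
        else:
            status = lookup_order(order_id)
            turn.reply_text = (
                f"Order {order_id} is {status}." if status
                else f"I could not find order {order_id}."
            )
    else:
        turn.reply_text = "Sorry, could you rephrase that?"
    turn.audio_out = synthesize(turn.reply_text)       # 5. speech generation
    return turn
```

In a production system each stage would stream rather than run to completion, which is how the full cycle can fit inside a tight latency budget; the sequential version here only shows the order of responsibilities.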

Where Indian Enterprises Are Deploying Voice AI Agents

Voice AI agents are in production across high-impact workflows spanning support, collections, sales, and proactive engagement.

What stands out are the outcomes they drive, from faster resolution and improved conversions to more efficient operations at scale.

| Use Case | Vertical | Haptik Outcome |
| --- | --- | --- |
| Lead qualification | Real Estate | 8-10% conversion uplift |
| EMI reminders + collections | BFSI | 85-92% cost reduction vs human agents |
| Admissions enquiry handling | Education | 50,000+ calls automated per season |
| Appointment scheduling | Healthcare | 24/7 availability, zero hold time |
| Post-purchase support | Retail/Ecommerce | 30-40% support volume reduction |
| Loyalty program | Travel & Hospitality | 10-15% increase in revenue via AI recommendations |

How to Identify Whether a Voice AI Deployment Works

A voice AI agent working in a demo is different from one in production. The real test lies in how consistently it handles real conversations, integrates with systems, and delivers measurable outcomes at scale.

1. Latency

A system that averages 500ms but spikes to 2-3 seconds under load will increase call abandonment, trigger repeat inputs, and degrade resolution rates even if the AI's answers are correct. That’s why consistency matters more than averages. The system needs to respond quickly and reliably, even during peak traffic, without breaking the flow of conversation.
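To make the "consistency over averages" point concrete, here is a small sketch that reports tail latency next to the mean. The sample values are made up for illustration and are not Haptik measurements; `latency_report` is a hypothetical helper name.

```python
import statistics


def latency_report(samples_ms: list[float]) -> dict[str, float]:
    """Summarize response latencies: mean plus median and tail percentiles."""
    # quantiles(n=100) returns 99 cut points; index 94 is p95, index 98 is p99.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(samples_ms),
        "p50": statistics.median(samples_ms),
        "p95": cuts[94],
        "p99": cuts[98],
    }


# Illustrative, made-up data: 90 fast turns and 10 slow turns under load.
samples = [400.0] * 90 + [2500.0] * 10
report = latency_report(samples)
```

With these numbers the mean is 610 ms, which looks acceptable on a dashboard, while p95 is 2,500 ms, meaning at least one caller in twenty waits long enough to talk over the agent or hang up. Tracking p95 and p99 under peak load is one way to catch that.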

2. Interruption handling

This is the moment customers decide if they trust the agent. People don’t wait for a system to finish speaking; they interrupt, correct themselves, or change direction mid-conversation. A voice AI agent must handle this naturally: pause, adapt, and respond to the new input without losing context.
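A common way to support interruptions (often called barge-in) is to run playback as a cancellable task and stop it the moment the caller starts speaking. The sketch below assumes a hypothetical `speak` coroutine that plays one chunk at a time and an `asyncio.Event` that a voice-activity detector would set; neither reflects a specific vendor API.

```python
import asyncio


async def speak(text: str, spoken: list[str]) -> None:
    """Hypothetical TTS playback: emits one word per tick so it can be interrupted."""
    for word in text.split():
        spoken.append(word)
        await asyncio.sleep(0.01)  # stand-in for playing one audio chunk


async def speak_with_barge_in(
    text: str, user_spoke: asyncio.Event
) -> tuple[list[str], bool]:
    """Play a reply, but stop as soon as the caller starts talking."""
    spoken: list[str] = []
    playback = asyncio.create_task(speak(text, spoken))
    interrupt = asyncio.create_task(user_spoke.wait())
    done, _ = await asyncio.wait(
        {playback, interrupt}, return_when=asyncio.FIRST_COMPLETED
    )
    interrupted = interrupt in done
    # Cancel whichever task is still running, then let both settle.
    for task in (playback, interrupt):
        if not task.done():
            task.cancel()
    await asyncio.gather(playback, interrupt, return_exceptions=True)
    # Returning what was actually spoken lets the agent keep context:
    # it knows which part of the reply the caller heard before cutting in.
    return spoken, interrupted


async def demo() -> tuple[list[str], bool]:
    user_spoke = asyncio.Event()

    async def caller_interrupts() -> None:
        await asyncio.sleep(0.025)  # caller cuts in partway through the reply
        user_spoke.set()

    interrupter = asyncio.create_task(caller_interrupts())
    result = await speak_with_barge_in(
        "Your order will arrive tomorrow between nine and eleven in the morning",
        user_spoke,
    )
    await interrupter
    return result
```

Running `asyncio.run(demo())` returns the words played before the interruption and a flag showing playback was cut short. Keeping the partial reply is the design choice that matters: the next turn can be planned with knowledge of what the caller did and did not hear.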

3. Multilingual and code-switching

In India, mid-sentence language switching is the default. Users naturally blend languages based on comfort, context, or even the specific word they’re looking for. A voice AI agent needs to follow this fluidity and understand mixed-language inputs before responding in a way that is equally natural.
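As a rough illustration of what detecting a language switch can involve, the sketch below tags each token by its Unicode script and flags utterances that mix scripts. This is only a first-pass heuristic with hypothetical function names: it cannot detect romanized Hindi ("mera order kab aayega"), which is common in practice and needs a trained language-identification model.

```python
import unicodedata


def token_script(token: str) -> str:
    """Return the dominant script of a token, e.g. 'LATIN' or 'DEVANAGARI'."""
    counts: dict[str, int] = {}
    for ch in token:
        # Keep letters and combining marks (vowel signs); skip digits and punctuation.
        if not ch.isalpha() and unicodedata.category(ch) not in ("Mn", "Mc"):
            continue
        script = unicodedata.name(ch, "UNKNOWN").split(" ")[0]
        counts[script] = counts.get(script, 0) + 1
    return max(counts, key=counts.get) if counts else "OTHER"


def is_code_switched(utterance: str) -> bool:
    """True when the utterance contains tokens from more than one script."""
    scripts = {token_script(t) for t in utterance.split()}
    scripts.discard("OTHER")
    return len(scripts) > 1
```

For example, `is_code_switched("mera order कब आएगा")` is true because Latin and Devanagari tokens appear in the same sentence, while a plain English sentence returns false.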

READ: How Multilingual Voice Agents Break Language Barrier

4. System integration

Without deep integration, voice agents stay as informational interfaces. With it, they become transactional systems that drive real outcomes.

5. Orchestration

Orchestration is where most enterprise deployments quietly fail. Consider a customer who asks to reschedule a delivery: the agent understands the request and confirms a new time, but fails to update the backend system. The user hears a successful response, but the actual change never happens. That gap between conversation and execution is where trust breaks.
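One way to close that gap is to confirm to the caller only what the backend actually holds after the write. The sketch below is an assumption-laden illustration: `DeliveryBackend` is a hypothetical in-memory stand-in for a logistics API, and its `fail_writes` flag simulates a silently dropped update.

```python
from typing import Optional


class DeliveryBackend:
    """Hypothetical stand-in for a logistics API (not a real service)."""

    def __init__(self, fail_writes: bool = False) -> None:
        self._slots = {"1042": "Mon 10:00"}
        self.fail_writes = fail_writes

    def update_slot(self, order_id: str, slot: str) -> None:
        if self.fail_writes:
            return  # write silently dropped: the failure mode described above
        self._slots[order_id] = slot

    def get_slot(self, order_id: str) -> Optional[str]:
        return self._slots.get(order_id)


def reschedule(backend: DeliveryBackend, order_id: str, new_slot: str) -> str:
    """Attempt the update, then read it back before telling the caller it worked."""
    backend.update_slot(order_id, new_slot)
    if backend.get_slot(order_id) == new_slot:
        return f"Done. Your delivery is now scheduled for {new_slot}."
    # Never claim success the system cannot verify; hand off instead.
    return "I couldn't confirm that change, so I'm connecting you to a colleague."
```

With a healthy backend, `reschedule(DeliveryBackend(), "1042", "Tue 14:00")` returns the confirmation. With `DeliveryBackend(fail_writes=True)` it returns the handoff message, so the spoken response always matches the state of the system of record.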

Why Voice AI Requires a Platform Approach

Voice AI agents sit on top of data, workflows, systems, and channels that all need to work together in real time. Treating voice as a standalone tool limits what it can actually deliver. To drive real outcomes, it has to be part of a broader system.

Conversations are only one layer

A voice AI agent that understands a user query is only scratching the surface.

Real resolution depends on:

- Pulling customer context from a CRM

- Triggering workflows across systems

- Updating transactions or tickets in real time

In production deployments, this is where most gaps emerge.

Across large-scale CX implementations, we’ve seen that over 60% of interactions require backend action to reach resolution. Without deep integration, these interactions stall and trigger escalation to human agents. This is the difference between answering a query and actually solving it.

Fragmentation breaks the experience

Enterprises often deploy voice, chat, and messaging as separate systems.

The result is predictable: Conversations don’t carry forward, context resets, and customers repeat themselves across channels.

In high-volume environments, this impacts outcomes:

- Longer resolution times

- Higher drop-offs

- Lower customer satisfaction

In contrast, unified deployments where voice is part of an omnichannel system consistently show:

- 20-30% reduction in repeat interactions

- Higher containment with better CSAT outcomes
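The mechanism behind carrying context forward can be sketched as a single store, keyed by customer, that every channel reads from and writes to. The class and function names here are hypothetical, and the in-memory dictionary stands in for whatever shared database or session service a real deployment would use.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    channel: str  # e.g. "chat", "voice", "WhatsApp"
    summary: str  # short note on what the customer asked for


class ContextStore:
    """In-memory stand-in for a context store shared by all channels."""

    def __init__(self) -> None:
        self._history: dict[str, list[Interaction]] = defaultdict(list)

    def record(self, customer_id: str, channel: str, summary: str) -> None:
        self._history[customer_id].append(Interaction(channel, summary))

    def last(self, customer_id: str) -> Optional[Interaction]:
        history = self._history.get(customer_id)
        return history[-1] if history else None


def opening_line(store: ContextStore, customer_id: str) -> str:
    """Voice greeting that picks up where another channel left off."""
    previous = store.last(customer_id)
    if previous is None:
        return "Hi, how can I help you today?"
    return (
        f"Welcome back. Are you calling about {previous.summary}, "
        f"which you raised on {previous.channel}?"
    )
```

If a chat session records `store.record("c-1", "chat", "a refund for order 1042")`, a later voice call from the same customer opens by referring to that refund instead of asking the customer to start over.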

The difference between a tool and a system

Point solutions are optimized for specific tasks. A tool automates a use case whereas a system manages a journey.

At scale, this distinction is measurable:

- Does the agent trigger real actions or just respond?

- Is context maintained across interactions?

- Are workflows coordinated or fragmented?

This is where Haptik’s approach is fundamentally different.

Voice AI agents aren’t built as standalone modules but operate within a broader conversational AI platform that brings together:

- Omnichannel interactions (voice, chat, messaging)

- Workflow orchestration and backend integrations

- Smart Agent Assist for human + AI collaboration

- Voice Campaign Manager for proactive engagement

Across deployments, this approach has enabled:

- Higher automation rate in high-volume support workflows

- Higher conversion rates in conversational journeys

- Significant reduction in operational cost per interaction

How to Evaluate a Voice AI Platform

When systems move from controlled environments to real usage, patterns emerge:

- performance varies under load

- language handling breaks in edge cases

- workflows don’t always complete as expected

- ownership becomes unclear after go-live

READ: Why Traditional QA Frameworks Fail for Voice AI Agents

Over time, these patterns determine whether a deployment scales or stalls.

What enterprises eventually realize is that voice AI is less about initial capability and more about how the system behaves under pressure, across use cases, and within existing workflows.

This is the lens Haptik is built around:

- A focus on measurable outcomes

- Systems designed for consistency at scale

- Native handling of multilingual, real-world conversations - with support for Indian languages, accents, and dialects

- Ongoing involvement through forward-deployed teams

- Experience across industries with proven, production-grade use cases

Because the real evaluation happens after go-live. And that’s where the right platform tends to become obvious.

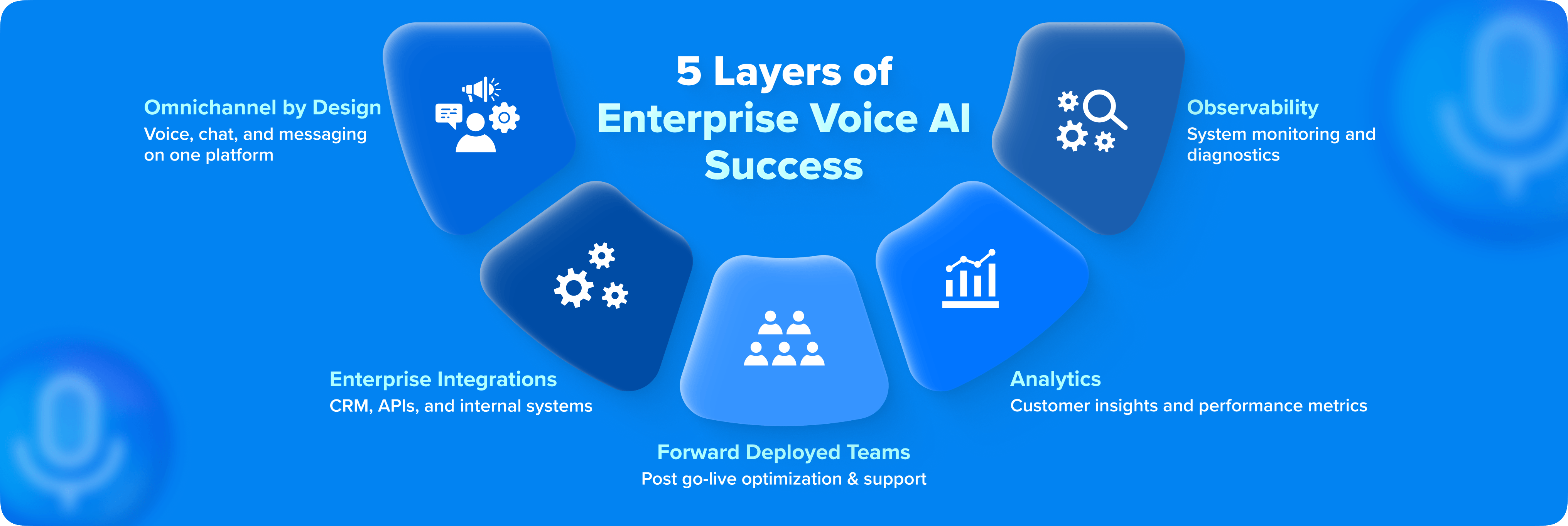

How Haptik Approaches Enterprise Voice AI

At a time when voice AI is widely treated as a standalone capability, we treat it as an operating system for CX.

Omnichannel by design: Voice, chat, and messaging on one platform

When voice, chat, and messaging operate on separate stacks, context fragments. Customers repeat themselves. Journeys break midway.

Haptik approaches this differently. Voice is not an add-on; it sits within a unified conversational layer that spans:

- Voice, chat, and messaging

- AI agents and human agents

- Inbound and outbound interactions

This ensures that context persists across touchpoints.

In large-scale deployments, this directly impacts outcomes:

- Lower repeat interactions

- Higher resolution rates

- More consistent CX across channels

Deep enterprise integrations

In enterprise environments, execution is the real bottleneck. Most voice AI systems can interpret intent. Far fewer can reliably fetch the right customer state, trigger multi-step workflows, and complete transactions across systems.

Haptik is designed with integration depth as a first principle. The platform connects directly with core enterprise systems like CRM, ticketing, payments, and internal APIs, so that conversations translate into actions.

Across deployments, we see that a majority of interactions require backend execution to reach resolution. Without this layer, automation plateaus early.

The result is a system that doesn’t just respond accurately but completes the task.

Forward-deployed teams (we don’t leave after go-live)

Enterprise success depends on post-deployment evolution.

Voice AI systems degrade if they are not actively managed:

- New edge cases emerge with scale

- User behavior shifts over time

- Workflows evolve with business needs

Haptik operates with forward-deployed teams that stay embedded beyond launch. The focus shifts from implementation to:

- Improving resolution rates

- Expanding automation coverage

- Continuously refining workflows and prompts

This is how systems move from handling a subset of queries to becoming a core part of CX operations.

Analytics and observability

Most voice AI deployments are measured using metrics that don’t reflect reality.

Containment rates, for instance, can look strong even when:

- Users drop off mid-conversation

- Queries are only partially resolved

- Escalations happen outside tracked flows

Haptik approaches observability differently. The focus is on:

- Resolution rates

- Where conversations break

- Business outcomes - conversions, collections, cost per resolution

- System-level bottlenecks across integrations and workflows
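The gap between containment and resolution is easy to see with a small calculation. The call records below are made up for illustration, and the field names are assumptions about what a call log might capture.

```python
from dataclasses import dataclass


@dataclass
class Call:
    escalated: bool  # handed to a human inside the tracked flow
    dropped: bool    # caller hung up mid-conversation
    resolved: bool   # the caller's request was actually completed


def containment_rate(calls: list[Call]) -> float:
    """Share of calls that never reached a human agent."""
    return sum(not c.escalated for c in calls) / len(calls)


def resolution_rate(calls: list[Call]) -> float:
    """Share of calls where the request was completed."""
    return sum(c.resolved for c in calls) / len(calls)


# Illustrative, made-up log of 10 calls.
calls = (
    [Call(escalated=False, dropped=False, resolved=True)] * 6     # fully resolved
    + [Call(escalated=False, dropped=True, resolved=False)] * 2   # hung up midway
    + [Call(escalated=False, dropped=False, resolved=False)] * 1  # partially resolved
    + [Call(escalated=True, dropped=False, resolved=False)] * 1   # escalated
)
```

Here containment comes out at 90% while resolution is only 60%. The drop-offs and the partially resolved call count as "contained" even though the customer left without an outcome, which is why resolution is the more honest headline metric.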

This shifts Voice AI from a black box to an observable system. Because the real question is not “Did the agent respond?” It is “Did the system deliver the outcome?”

Final Thoughts

The enterprises winning with voice AI in 2026 are the ones operationalizing conversations at scale.

They’ve figured out which interactions can be handled reliably by AI agents, and where human intervention still adds value. That balance between automation and human judgment defines modern CX.

Across industries - from retail and eCommerce to BFSI, education, and real estate - this shift is underway. Haptik is at the center of this transition through building, deploying, and scaling AI-powered voice, chat, and messaging solutions for 500+ enterprises across channels like WhatsApp, web, RCS, and more.

See what a Haptik voice AI agent sounds like on a real call from your industry. Book a 20-minute live demo.
